Autonomous chat

Designing human oversight at scale

When AI handles the conversation by default, the design problem is no longer the reply. It is oversight, intervention, and accountability.

Opening

In most systems, humans stay in the loop. But as model capabilities increase, that loop becomes a bottleneck — humans reviewing every message the AI could handle on its own.

I designed autonomous chat as a system where AI handles conversations by default, and humans enter only at the boundary.

Autonomy Spectrum diagram

The shift

When we shipped Recommended Response, I expected experts to use it cautiously.

Instead, I saw a pattern:

heavy edits at first

then progressively lighter edits

eventually, selective trust

Even when experts rejected the suggestion, they still replied faster.

The AI had become a cognitive anchor.

That raised a harder question: if AI can already handle parts of the conversation reliably, why is the expert still required to touch every message?

The decision

No one yet knows the right model for human-AI collaboration at L4, the level of autonomy where AI runs the conversation by default. The design work is in exploring the space honestly and reasoning through the tradeoffs.

I explored two conceptual directions for the expert's role.

1. Sequential takeover.

AI handles the conversation until it flags a risk. The expert takes over and finishes the conversation entirely. Clean ownership, simple mental model — but the system doesn't change. One expert is still tied to one conversation at a time.

2. Concurrent supervision.

AI handles the conversation until it flags a risk. The expert steps in for the critical moment — then returns control to AI. The expert stays across many conversations. The system becomes a loop.

In both models, the default is the same: AI handles the conversation end-to-end. Most conversations never require a human. The question is what happens at the edge — and how often the expert gets pulled out of supervision into execution.
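The difference between the two directions can be sketched as a single ownership rule. This is a minimal illustration, not the shipped logic: the event names `risk_flagged` and `expert_resolved` and the `owner_after` function are hypothetical.

```python
from enum import Enum


class Owner(Enum):
    AI = "ai"
    EXPERT = "expert"


class Model(Enum):
    SEQUENTIAL_TAKEOVER = "sequential_takeover"
    CONCURRENT_SUPERVISION = "concurrent_supervision"


def owner_after(model: Model, owner: Owner, event: str) -> Owner:
    """Return who owns the conversation after an event.

    Event names are assumptions made for this sketch:
    "risk_flagged" and "expert_resolved".
    """
    if event == "risk_flagged":
        # Both models hand the flagged moment to the expert.
        return Owner.EXPERT
    if event == "expert_resolved" and model is Model.CONCURRENT_SUPERVISION:
        # Control returns to AI, so the expert is free for other conversations.
        return Owner.AI
    # Otherwise ownership is unchanged. Under sequential takeover this means
    # the expert keeps the conversation until it ends.
    return owner
```

The two models share every branch except one: what happens once the expert has resolved the critical moment.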

The Two Loops diagram

I chose concurrent supervision. Not because it was simpler, but because it changed the economics of the edge case.

The deeper reason was structural: in a service system moving toward autonomy, the expert's value is judgment at the boundary, not coverage across the whole surface. Sequential takeover preserved the old model's shape while adding AI. Concurrent supervision redesigned the job.

Concurrent supervision also changes what accountability means, from depth on one conversation to breadth across many. That's a real cost, and the system has to justify it.

I wasn't designing a feature. I was designing the role of the human in the system.

Exploring the interaction models

The control loop defines what the system does. But the expert still has to experience it: scan a fleet, enter a conversation, act, and return. No one yet knows what that should feel like, either.

I explored three interaction models.

Channel list

Sidebar queue grouped by severity, full conversation panel, AI context panel. The expert handles one conversation at a time with full depth — complete history, AI reasoning, customer data — without switching views.

Maximum context per conversation. But fleet visibility shrinks to the sidebar queue while working a case.

Best for high-stakes, low-volume queues where getting the answer right matters more than speed.

Whack-a-mole

Conversations pop forward as they need attention. The expert resolves one, dismisses it, and the next surfaces. Spatially opinionated — there is only ever one active case in view.

Low cognitive load per action. But no sense of the queue behind the current case.

Best when volume is low enough that a single focused feed is the system's actual shape.

Monitor grid

A dense card grid with fleet-level metrics. The expert sees twenty or more conversations at once. Flagged chats open in a floating window scrolled to the decision point.

Maximum fleet visibility. But the floating window covers the grid underneath — fleet awareness drops during intervention.

Best for high-volume surveillance where most cases are AI-handled.

The recommendation

I recommended the channel list. It's the only model that supports both needs at once: deep intervention in one conversation while the fleet state remains visible in the sidebar.

The monitor grid optimizes for scanning, but the floating window covers the grid during the exact moment fleet awareness matters most. The whack-a-mole collapses to a single case. The channel list keeps the expert in a conversation and across the fleet at the same time.

The whack-a-mole is the most spatially opinionated — worth revisiting once usage data reveals typical queue sizes. The monitor grid is a future state for very high autonomy ratios, where one expert oversees hundreds of sessions and scanning dominates the job.

The flagging taxonomy

Across all models, the system needed to communicate not just that intervention was required — but why.

A raw confidence score did not help much: it's hard for an expert to act on “78% confident.” Instead, I introduced three categories.

Paused — hard flags, AI stops, expert required.

Watch — soft flags, AI continues, expert monitors.

Healthy — no flags, AI handling, no attention needed.

The taxonomy is not just a model output. It is a product decision about where human judgment belongs. The thresholds were set with the service delivery team and compliance — and designed to evolve over time.
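As a rough sketch, the three categories can be modeled as a mapping from raised flags to a status. The flag names below are hypothetical placeholders, and treating every non-hard flag as soft is an assumption of this example. The real thresholds were set with service delivery and compliance.

```python
from enum import Enum


class Status(Enum):
    PAUSED = "paused"    # hard flag: AI stops, expert required
    WATCH = "watch"      # soft flag: AI continues, expert monitors
    HEALTHY = "healthy"  # no flags: AI handling, no attention needed


# Hypothetical hard flags, for illustration only.
HARD_FLAGS = {"regulatory_advice", "customer_dispute"}


def classify(flags: set[str]) -> Status:
    """Map the set of flags raised on a conversation to a status."""
    if flags & HARD_FLAGS:
        return Status.PAUSED
    if flags:
        # Assumption: any flag that is not a hard flag counts as soft.
        return Status.WATCH
    return Status.HEALTHY
```

Keeping the hard-flag set as data rather than logic is one way to let the thresholds evolve without touching the classification rule.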

The intervention moment

When the expert clicks into a paused conversation, the system shows the flag and AI's reasoning — “Why AI paused” with specific risk tags — in the context panel.

A divider marks the transition to human: “You are in control, AI is standing by.”

Below it, a suggested reply — grounded in the conversation and the customer's record — ready to discard, edit, or send. The expert reviews, makes a judgment, and acts.

Sometimes the situation requires a different direction entirely. The expert can trigger /guide to steer AI's response. Whether they're editing AI's draft or steering AI from scratch, they never start from zero.

When the situation is stable, the expert hits “Resume AI.” They step in only for the critical moment that requires human judgment.
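The actions available during an intervention can be sketched as a small object. This is an illustration under assumptions: the `Intervention` class, its method names, and the `redraft` callable (a stand-in for the model call behind /guide) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Intervention:
    suggested_reply: str     # AI draft shown below the divider
    ai_active: bool = False  # False while the expert is in control

    def send(self, edited: Optional[str] = None) -> str:
        """Send the draft as-is, or the expert's edited version if given."""
        return edited if edited is not None else self.suggested_reply

    def guide(self, instruction: str, redraft: Callable[[str], str]) -> str:
        """Replace the draft using a steering instruction (the /guide path)."""
        self.suggested_reply = redraft(instruction)
        return self.suggested_reply

    def resume_ai(self) -> None:
        """Hand control back to AI once the situation is stable."""
        self.ai_active = True
```

On both paths the expert works from an existing draft, which mirrors the point that they never start from zero.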

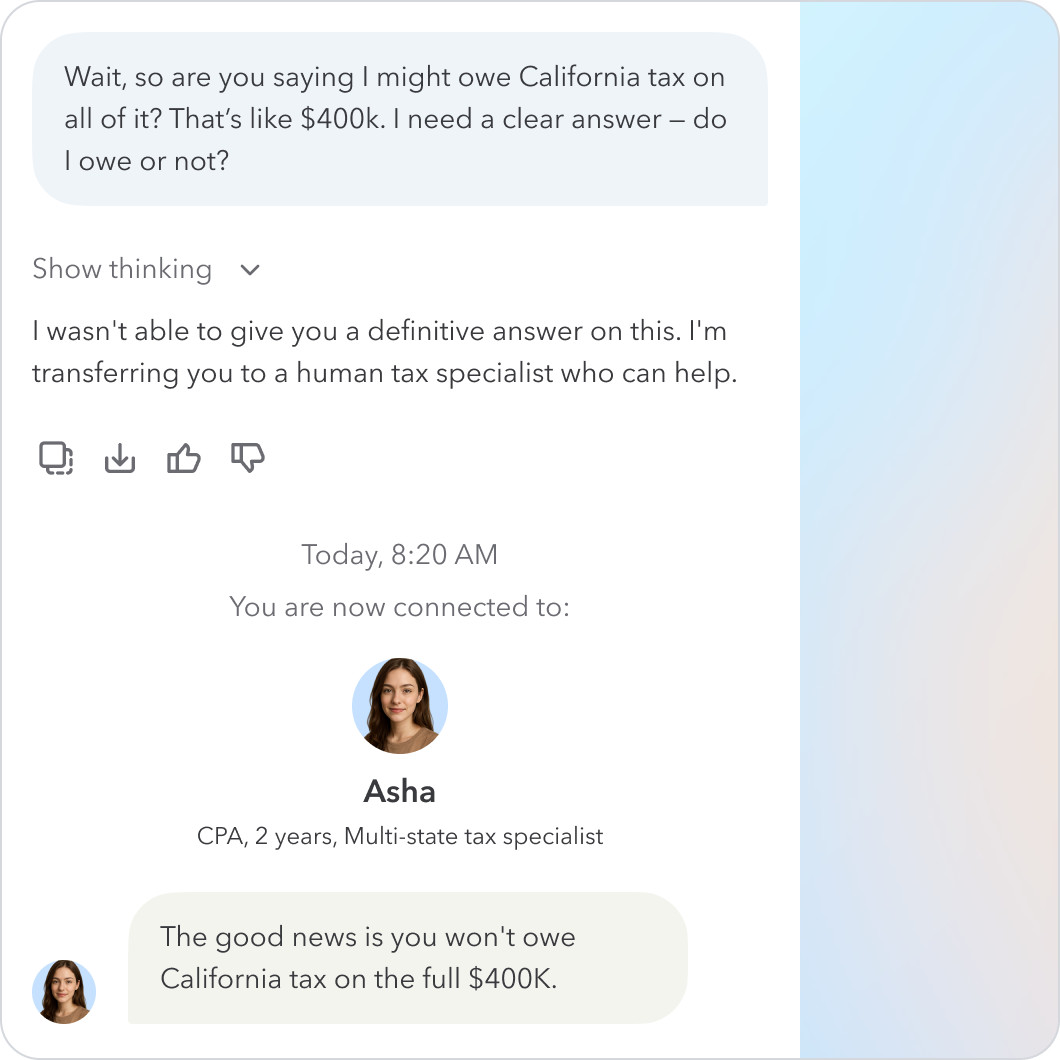

The seam

Should the customer know when an expert steps in?

I explored three models.

Invisible transition

The customer converses with a single entity throughout — no trace of the expert on the front end, even when they're the one responding behind the scenes.

Seamless in theory, but the delayed response when an expert steps in felt odd. The seam is invisible, but the pause is not.

Full disclosure

The system announces its limitation and hands off to a human explicitly. Honest — which matters in a high-stakes financial workflow. But it makes the conversation feel jarring.

Every transition breaks the flow, and the customer is left wondering whether to trust what the AI said before the handoff.

Soft signal

The response carries a “Reviewed by Asha, CPA” stamp. The customer knows a specialist is present, and the conversation still feels seamless: no major UI change, just one added layer of human approval.

I chose the soft signal. In a regulated, high-stakes financial conversation, trust depends on knowing who is accountable.

The customer needed clarity at the moment that mattered.

Final thoughts

Designing for L4 exposed a distinction that L2 had obscured: AI confidence is not the same as AI competence.

In assisted systems, humans compensate continuously. In autonomous systems, the system must surface its own limits before they cause harm.

The control loop — not the model — is the product. Designing how a system exposes its limits, and how humans act on them, is one of the central problems of AI systems.